DIC Theory Overview¶

Digital Image Correlation (DIC) is a technique for measuring deformation, displacement, and strain fields by analyzing a sequence of images captured during a loading process. At its core, DIC tracks the movement of patterns and textures between images, and from this motion, deduces how the material has deformed.

Local Subset DIC¶

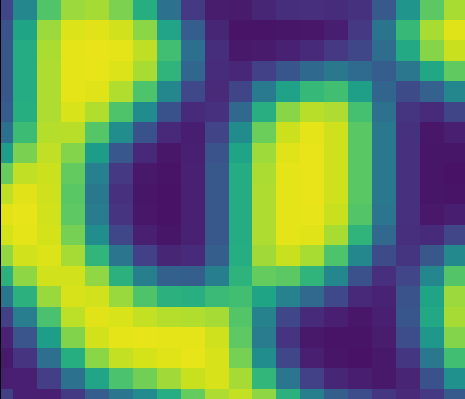

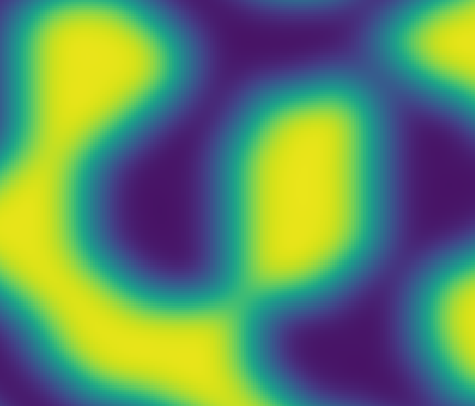

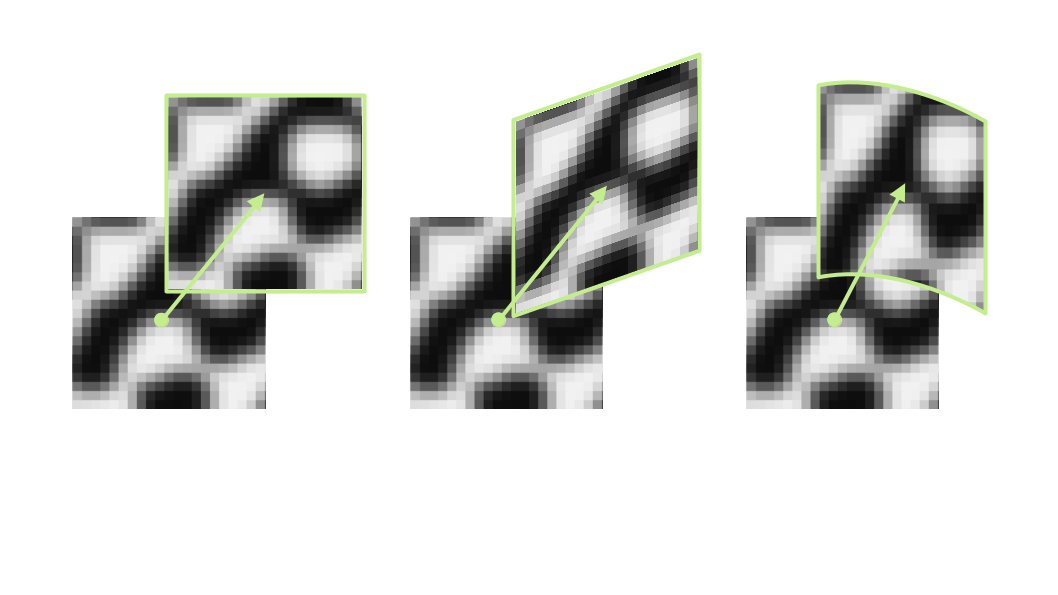

In Local subset DIC, images are divided into small \(N \times N\) pixel regions which are called subsets. By comparing the intensity patterns of these subsets across images, we can estimate their displacement. The image below shows the difference (or cost), where 0 is a perfect match, for a brute force scan along the x-axis.

Typically we don’t use a brute-force approach, but instead use an optimization algorithm that is much more computationally efficient. The optimization attempts to minimize the difference between the reference and deformed subset by using the gradient. You can see from the brute-force approach that there are local minima where the optimizer might get stuck, so it’s important to ensure that any initial guess is reasonably close to the actual parameters that define the mapping from the subset in the reference image to the subset in the deformed image.

Shape Functions¶

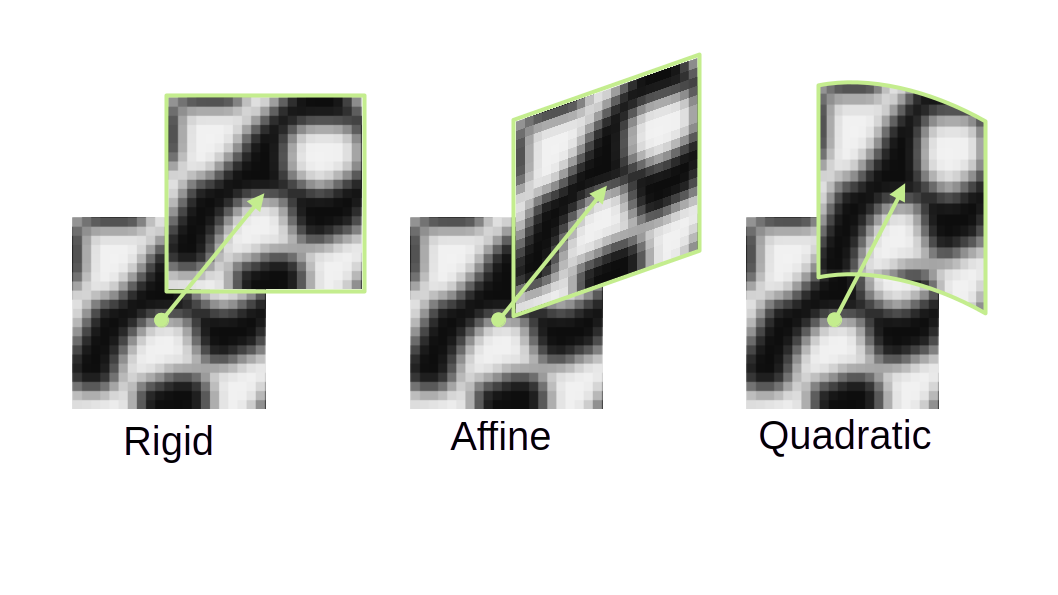

It’s often the case that a subset undergoes more complex deformation than just rigid translation between reference and deformed image. To model how a subset deforms more generally, we introduce shape functions. These are mathematical mappings that describe how points inside a subset move relative to each other. Pyvale supports three commonly used shape functions in DIC. The simplest of course is a pure rigid translation (two parameters: horizontal and vertical shift). After this comes affine and quadratic shape functions:

Each higher-order shape function includes all terms from the lower-order functions: affine includes rigid terms, and quadratic includes both affine and rigid terms.

Cost Functions / Correlation Criterion¶

How do we decide if two subsets match? To do this we use what’s known as a cost function, or often referred to as a Correlation Criterion. This is a numerical measure of similarity between the reference subset and the deformed subset. Pyvale supports three choices:

SSD¶

NSSD¶

ZNSSD¶

where \(f(x_{i},y_{i})\) and \(g(x_{i},y_{i})\) represent the gray-level intensity values at location \((x_{i},y_{i})\) in the reference and deformed images. \(\bar{f}(x_{i},y_{i}) = f(x_{i},y_{i}) - f_m\), and \(f_m\) is the mean gray-level value of the subset.

Cost Function Optimization¶

Minimizing the value of the cost function requires using an optimization routine. These algorithms start with an initial guess and refine it step by step. Convergence depends on a good initial guess and a generally well-behaved cost surface. Pyvale uses a Levenberg-Marquardt non-linear optimization routine to minimize the cost function. The algorithm proceeds iteratively by updating the shape-function parameter vector \(\mathbf{p}\). At iteration \(i\), the parameters are updated according to

where \(\nabla C(\mathbf{p}_{i})\) denotes the gradient of the cost function evaluated at the current parameters. The matrix \(\mathbf{H}\) is an approximation to the Hessian, taken here as \(\mathbf{H} \approx \mathbf{J}\mathbf{J}^{\mathsf{T}}\), with \(\mathbf{J}\) the Jacobian of the residuals with respect to \(\mathbf{p}\). The scalar \(\lambda > 0\) is the Levenberg–Marquardt damping factor, which controls the balance between gradient-descent and Gauss–Newton behavior.

After computing the updated parameter vector \(\mathbf{p}_{i+1}\), the cost function is re-evaluated to assess the quality of the update. Based on the resulting change in error, the damping parameter \(\lambda\) is adjusted. If the cost increases, don’t update the shape function parameters and increase the damping by a factor of 10. If the cost decreases, the update is accepted and the damping is reduced by a factor of 10. This update–evaluation–adjustment cycle is repeated until convergence criteria based on both parameter change and cost-function reduction are satisfied. The magnitude of the parameter update is quantified by

where \(\Delta\mathbf{p} = \mathbf{p}_{i+1} - \mathbf{p}_{i}\), and \(\langle \cdot,\cdot \rangle\) denotes the Euclidean inner product. From these quantities, the relative parameter-update tolerance is defined as

with \(\varepsilon\) a small regularizing constant included to avoid division by zero. Convergence in terms of the cost function is measured using

Iteration terminates once both \(x_{\text{tol}}\) and \(f_{\text{tol}}\) fall below user-specified thresholds, indicating that further updates would produce only negligible changes in the parameters and the cost function.

Sub-pixel Accuracy With Interpolation¶

DIC aims for precision beyond the integer values defined by the image’s pixel grid. Integer-pixel matching is a good start, but physical displacements do not perfectly map to pixel boundaries. To capture this, we refine the measurement to sub-pixel accuracy using interpolation. Instead of treating the image as a discrete array, we approximate it as a smooth surface. This is done using cubic B-spline interpolation.

Cubic B-spline Interpolation¶

Cubic B-spline interpolation represents the interpolated function as a weighted sum of shifted B-spline basis functions:

where \(c_{i,j}\) are B-spline coefficients and \(\beta^3(t)\) is the cubic B-spline basis function:

Prefiltering¶

To ensure that the B-spline interpolation passes through the original intensity values, we can apply a filter to get the B-spline coefficients. For a 1D row of intensity data \(\{f_0, f_1, \ldots, f_{N-1}\}\), the filtering has three steps. First is to apply a normalization:

with pole \(z = \sqrt{3} - 2\). Then apply a causal filter:

with an initial condition of \(c_0^{(+)} = c_0^{(0)}\). The final step is to apply an anticausal filter. Starting at the end of the row:

and then applying:

This 1D filtering can be done for each row of the image to get a list of coefficients \(c_{i,j}^{(x)}\). It can then be applied to the columns of the result to get a full list of coefficients \(c_{i,j}\).

Evaluation¶

To get the intensity value at an arbitrary subpixel location \(f(x,y)\) we compute the local coordinates:

then evaluate the basis functions for the local coordinates:

and compute the interpolated value:

Reliability-Guided DIC (RG-DIC)¶

It’s highly likely that some subsets will poorly correlate due to texture changes, cracks, noise, changes in lighting, or large local deformations. Reliability-Guided DIC (RG-DIC) was a method developed by B. Pan (2009) that helps to limit the amount of poor results by correlating subsets in an order determined by the magnitude of the correlation coefficient. The algorithm proceeds as follows:

The user selects an initial seed location. Correlation is performed at the seed location and its 4 neighboring points. These points are marked as computed in a global mask.

The four points are added to a queue ordered from highest correlation coefficient to lowest.

The point at the top of the queue is removed. Correlation is then performed for its uncomputed neighbors. Successful correlations from previously computed neighboring subsets are used as initial conditions for the optimization routine.

Newly computed points are updated in the global mask and added to the queue.

The algorithm then expands outward in a front-propagation style until all subsets have been computed.

Strain Calculations & Formulations¶

The in-plane strain components can be calculated using the spatial derivatives of the displacement field to capture local changes in geometry. The 2D deformation gradient matrix, \(\mathbf{F}\), is given by:

To calculate the partial derivatives above, we compute the gradient over a square window containing \(N \times N\) displacement data points from the DIC calculation. Because we are using displacement values from DIC, the strain window is dependent on the subset-step, \(s\), and subset-size, \(w\). These quantities, along with the size of the strain window, form what is typically referred to as the Virtual Strain Gauge, \(VSG\):

Due to the noise in DIC measurements, smoothing is typically applied over the strain window. We support bilinear and biquadratic smoothing over the strain window elements. The polynomial approximation is given by:

where \(\boldsymbol{u} = [\,u_x\;u_y\,]^{T}\), \(\mathbf{P}(x,y)\) is a row vector of basis terms, and \(\mathbf{c}\) is a column vector of coefficients. The polynomial basis is:

The coefficients are obtained by solving a linear least-squares problem, since the displacement field is linearly parameterized in the unknown coefficients. Once the polynomial coefficients have been obtained, the deformation gradient tensor and strain can be calculated. We currently support the following strain tensor formulations:

strain_formulation="HENCKY", Hencky (logarithmic): \(\boldsymbol{\varepsilon}=\ln(\sqrt{\mathbf{F}^\mathsf{T}\mathbf{F}})\)strain_formulation="GREEN", Green–Lagrange: \(\boldsymbol{\varepsilon}=\tfrac{1}{2}\left(\mathbf{F}^\mathsf{T}\mathbf{F}-\mathbf{I}\right)\)strain_formulation="ALMANSI", Euler–Almansi: \(\boldsymbol{\varepsilon}=\tfrac{1}{2}\left(\mathbf{I}-(\mathbf{F}\mathbf{F}^\mathsf{T})^{-1}\right)\)strain_formulation="BIOT_LAGRANGE", Biot (right / Lagrangian): \(\boldsymbol{\varepsilon}=\sqrt{\mathbf{F}^\mathsf{T}\mathbf{F}}-\mathbf{I}\)strain_formulation="BIOT_EULER", Biot (left / Eulerian): \(\boldsymbol{\varepsilon}=\sqrt{\mathbf{F}\mathbf{F}^\mathsf{T}}-\mathbf{I}\)

where \(\mathbf{I}\) is the \(2 \times 2\) identity matrix.